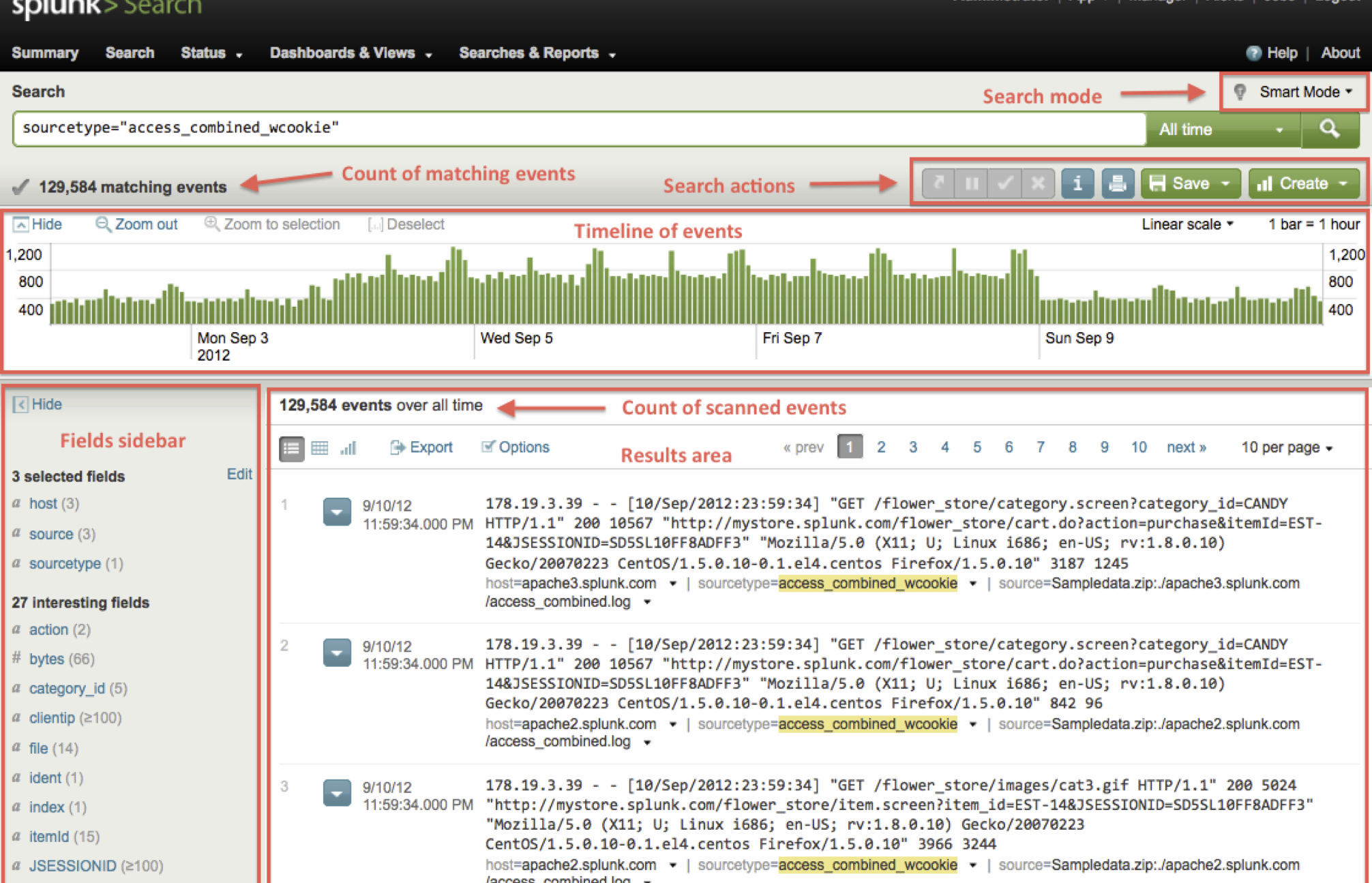

Systems generate a lot of machine data from activity such as events and logs. The idea of Splunk is to be a data platform that captures and indexes all this data so that it can be retrieved and interpreted in a meaningful way. Since Splunk is intended to index massive amounts of machine data, it has a large scope of use cases. A data platform could give insight into many aspects of a system, including: application performance, security, hardware monitoring, sales, user metrics, or reporting and audits. In this blog post I want to give an introduction to Splunk. I will cover how we can use it to search and interpret data, generate reports and dashboards, as well as pointing out features that have been very helpful for me as a developer.

Disclaimer: I’m not at all a Splunk expert! That said, even with a rudimentary understanding I have found tremendous value in incorporating the use of Splunk into my daily workflow.

My experience is mainly with Splunk, but the approaches I cover in this post should be applicable to alternative solutions, such as the ELK stack.

The Splunk Architecture

A Splunk system consists of forwarders, indexers, and search heads.

- A forwarder is an instance that sends data to an indexer or another forwarder.
- An indexer stores and manages the data.
- A search head distributes search requests to indexers and merges the results for the user.

Splunk can acquire data that is sourced from programs, or manually uploaded files. Data is decoupled from the applications that produce it and it is centralised in a distributed system.

Users can access Splunk via the web browser and roles can be assigned to control access. This is a very simple overview of the Splunk architecture. I want to focus on what you could do with Splunk over the administrative side. You could set up Splunk manually with Splunk Enterprise, or use their managed solution Splunk Cloud Platform. For this blog post, I have set up a free edition of Splunk Enterprise locally.

Searching with Splunk

As a developer, the type of machine data I interact with most will naturally be logs output by systems. Splunk comes with the search app by default for querying events. You can also implement or install custom apps. A benefit of using a Splunk deployment is that I don’t have to worry about finding where my data is stored once an application is set up to forward data to the indexers. I have set up Splunk locally with some sample data from the official search tutorial.

If a data platform is designed to index any kind of data, then my logs should be in there somewhere. Queries are made using Splunk’s Search Processing Language (SPL). In the figure above I used the query index=* which will match the index field to a wildcard and set the time window to ‘all time’. This retrieves every event because data in Splunk is stored in the indexes.

It is good practice to keep our queries concise, otherwise the search could take a long time to run. We can reduce the lookup time by choosing a smaller time frame on the left or limiting the number of results. You can reduce the time searching takes by: See more about quick tips for optimisation from Splunk.

Suppose that I wanted to search for events related to vendor sales for only account id ‘6024298300471575’ in March 2022, I could set the time window and run the following query string:

`index=main sourcetype=vendor_sales/vendor_sales AcctID=6024298300471575`

Although this example uses the ‘main’ index, it is better to use split indices by category of the data. This prevents bloating of a single index and provides an efficient way to filter data when performing queries, searching only for the indexes that are relevant.

Now let’s say I was interested in how many failed attempts to log on occurred during the first seven days of March in my data. I happen to know that the log has the format: There were 31,586 events during that period. I can also see how many events occurred by the hour on the timeline just below the query. We can see that these events only occurred during the first hour of each day.

Searching also supports boolean operations such as AND, OR, XOR, NOT. It is quite common for API requests to include an X-Request-ID or X-Correlation-ID header. These headers are set to keep track of where the associated events originate from.
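The vendor sales search in this post sets the time window in the Splunk UI. As a rough sketch, the same search string could also be assembled in Python with the window written inline using SPL's `earliest` and `latest` time modifiers. The helper name `build_search` and its parameters are hypothetical and only for illustration; it is not part of any Splunk SDK, and it only builds the query text rather than running it.

```python
from datetime import datetime

# Timestamp layout that SPL accepts by default for earliest= / latest= values.
SPL_TIME_FORMAT = "%m/%d/%Y:%H:%M:%S"


def build_search(index, sourcetype, start, end, **fields):
    """Assemble an SPL search string (hypothetical helper, not a Splunk API)."""
    terms = [f"index={index}", f"sourcetype={sourcetype}"]
    # Terms separated by spaces are combined with an implicit AND in SPL.
    terms += [f"{name}={value}" for name, value in fields.items()]
    # Bound the search in time so it does not scan every stored event.
    terms.append(f'earliest="{start.strftime(SPL_TIME_FORMAT)}"')
    terms.append(f'latest="{end.strftime(SPL_TIME_FORMAT)}"')
    return " ".join(terms)


# Vendor sales events for one account during March 2022.
query = build_search(
    "main",
    "vendor_sales/vendor_sales",
    datetime(2022, 3, 1),
    datetime(2022, 4, 1),
    AcctID="6024298300471575",
)
print(query)
```

Pasting the printed string into the search bar should behave like the UI-driven version, assuming the index, sourcetype and field names match your data. Keeping the time bounds in the query itself makes a search easier to share and repeat.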
